Human‑Centered AI for Learning, Interaction, and Care

The Interdisciplinary Research Seminar on 4 March 2026 highlighted projects at the intersection of AI, human–technology interaction, education, mobility, and care, demonstrating IT:U’s blend of methodological rigor and societal relevance. PhD candidates from IT:U’s Computational X and Digital Transformation in Learning programs presented ongoing work, reflecting the programs’ breadth, from urban mobility to care technologies.

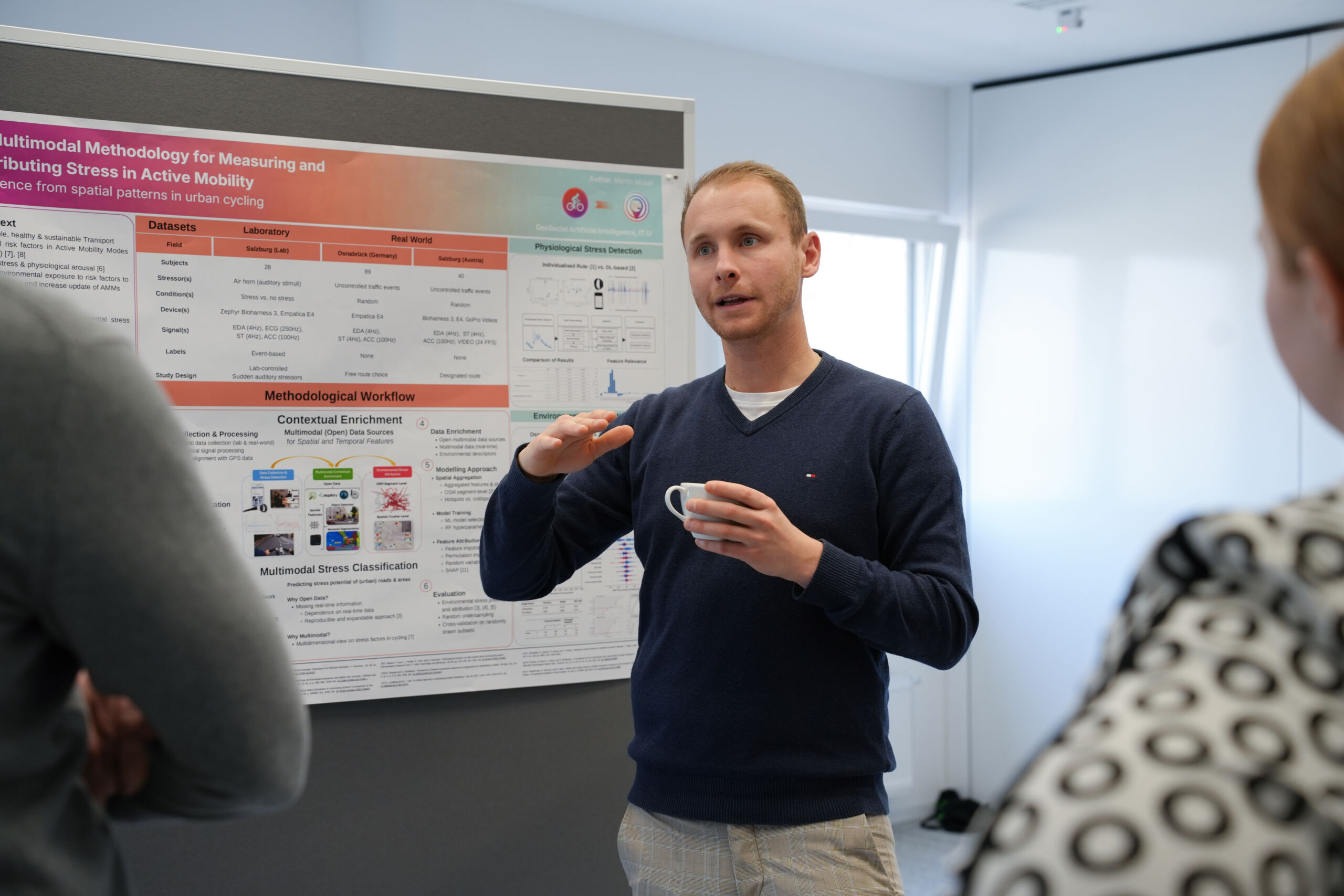

Safer cycling through stress mapping

Martin Mose from the GeoSocial AI Research Group combines wearable sensors, GPS, and explainable machine learning to identify where cyclists feel most stressed, particularly at complex junctions and on busy roads in Salzburg and Osnabrück. Next, the team will add more environmental data and eye tracking to explain not only where stress occurs but also why, giving planners and designers evidence to guide safer street design.

From mentions to maps

David Hanny from the GeoSocial AI Research Group uses large foundation models to read text posts and infer three things at once: where an event likely happened, what the post is about, and how people feel about it. In practice, this means detecting events from posts, resolving place names to map locations, and extracting fine-grained emotions tied to specific aspects of what is being discussed. Next, the team will run a first systematic evaluation of this approach and develop a new aspect-based emotion analysis model that could be used, for example, in crisis response.

Smarter interruptions with attention management systems

Alexander Lingler from the Intelligent User Interfaces Research Group aims to make multitasking safer and easier. The team trains AI schedulers using computational models of human behavior. In lab and mobile typing tasks with changing priorities, these schedulers outperformed self-chosen task switching and reduced workload. The results show that the approach works in both stationary and mobile contexts; next steps move toward cognitively grounded driving simulations that integrate visual perception and mobile interaction.

What “autonomy” really means in human–AI interaction

Dinara Talypova from the Intelligent User Interfaces Research Group studies autonomy, which can be split into two components: positive liberty (the freedom to pursue one’s own goals) and negative liberty (freedom from constraints). The study finds that positive liberty strongly increases people’s intention to use AI systems, whereas negative liberty mainly strengthens their sense of agency and slightly lowers usage intention. These findings offer concrete guidance for designing AI systems that people want to use.

How lecturers and AI share decisions

Melike Nur Köroğlu from the Digital Transformation in Learning program researches the use of AI in higher education. A review of 28 studies shows that AI tools in this sector mostly assist lecturers rather than replace them, supporting early steps such as detecting issues, predicting outcomes, and generating feedback, while lecturers retain responsibility for interpretation and pedagogical decisions. A new model maps levels of AI autonomy onto the stages of teaching decisions to inform the design of better human–AI collaboration. Next, a vignette study will examine how to design more agentic AI that genuinely helps lecturers make teaching decisions.

STEAM pathways to AI literacy in schools

Gala Niri from the Digital Transformation in Learning program examines how pupils develop AI literacy. A review of 39 studies shows that research in this area has grown rapidly since 2021, focusing mostly on middle and high schools. Most of this work builds technical skills, such as understanding AI concepts, computational thinking, and data literacy, with less emphasis on ethics, creative futures, managing AI, and design. The authors propose a refreshed framework and call for earlier, more balanced learning across subjects, not just in technology classes. They recommend tasks that combine technical fluency with ethics, collaboration with AI, and iterative design.

AI peer support for computational thinking in university

Saba Soleimani from the Digital Transformation in Learning program is building an AI peer agent that helps university students practice computational thinking (CT) skills: decomposition, abstraction, logical reasoning, and algorithm design. Early pilots showed that clear task framing and manageable cognitive load are crucial for engagement, which led to refined task sets. The full study will measure performance, perceptions (e.g., trust and social presence), and interaction patterns to guide how AI peers can be integrated into CT learning.

Caring with conversation in dementia

Ralf Vetter from the Human Computer Interaction Research Group researches AI-assisted care robots for people with dementia. Over six months, a conversational agent housed in a push-button phone was co-designed with people living with dementia, their families, and care workers. The work shows how data, prompts, relatives’ perspectives, and the qualities of the device shape care interactions: they can support recognition and validation, but they also reveal breakdowns. The work argues for care-centered, situated design that examines how technology helps sustain personhood, and it highlights how specific design choices affect lived experiences in dementia care.

Educators’ roles in an AI‑rich university

Adrijana Krebs from the Digital Transformation in Learning program conducts interviews with educators, students, and learning experts, which show that technology reshapes teaching rather than replacing it. Human interaction, judgment, and context remain central. Participants value personalization and relational engagement, and they call for ongoing development in pedagogy and critical digital competence so that technology can be integrated responsibly. Future phases will examine how relationships, emotions, and institutional conditions shape professional identities in AI-rich settings.

AI as an augmentative partner: Turning research into real-world impact

Across the projects, the common thread is practical, interpretable tools that support people, from cycling stress maps that inform safer infrastructure to autonomy-aware and hybrid decision frameworks in education, helping translate AI into real-world benefit. The researchers are moving toward context-aware systems that work with people, amplifying human judgment, safety, learning, and care. Overall, the Interdisciplinary Research Seminar presented a coherent vision of AI as an augmentative partner: transparent, accountable, and tuned to human contexts.